ISF (Interactive Shader Format) is a wonderful open standard: it gave the VJ world a portable shader format and an enormous community library. But an ISF shader is a GLSL fragment shader described by a JSON header, and it runs inside a host that often renders through OpenGL or through a GLSL-to-Metal translation layer. The host pays for that extra layer in parsing, parameter binding, and context switching, and the binding and context-switching costs recur every frame.
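For context on the parsing an ISF host does: an ISF file declares its parameters as a JSON object inside a leading `/* ... */` comment, with an `INPUTS` array whose entries carry keys such as `NAME` and `TYPE`. The following is a minimal Swift sketch of extracting and decoding that header with Foundation's `JSONDecoder`. The type and function names (`ISFInput`, `ISFHeader`, `parseISFHeader`) are hypothetical, and the sketch does not reflect how any particular host implements this.

```swift
import Foundation

// Hypothetical model of one ISF input. Only NAME and TYPE are decoded;
// JSONDecoder ignores the other keys (DEFAULT, MIN, MAX, LABEL, ...).
struct ISFInput: Decodable {
    let name: String
    let type: String

    enum CodingKeys: String, CodingKey {
        case name = "NAME"
        case type = "TYPE"
    }
}

// Hypothetical top-level header. INPUTS may be absent, so default to empty.
struct ISFHeader: Decodable {
    let inputs: [ISFInput]

    enum CodingKeys: String, CodingKey {
        case inputs = "INPUTS"
    }

    init(from decoder: Decoder) throws {
        let container = try decoder.container(keyedBy: CodingKeys.self)
        inputs = try container.decodeIfPresent([ISFInput].self, forKey: .inputs) ?? []
    }
}

// Pulls the JSON out of the first /* ... */ comment and decodes it.
// Returns nil when the source has no comment block at all.
func parseISFHeader(_ source: String) throws -> ISFHeader? {
    guard let open = source.range(of: "/*"),
          let close = source.range(of: "*/", range: open.upperBound..<source.endIndex)
    else { return nil }
    let json = source[open.upperBound..<close.lowerBound]
    return try JSONDecoder().decode(ISFHeader.self, from: Data(json.utf8))
}

// Example ISF source: JSON header in a comment, GLSL body after it.
let sample = """
/*{
    "ISFVSN": "2",
    "DESCRIPTION": "Example tint",
    "INPUTS": [
        { "NAME": "intensity", "TYPE": "float", "DEFAULT": 0.5, "MIN": 0.0, "MAX": 1.0 },
        { "NAME": "tint", "TYPE": "color" }
    ]
}*/
void main() { gl_FragColor = vec4(1.0); }
"""
```

This work happens outside the shader itself; a host still has to map each decoded input onto a GLSL uniform before it can draw.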

RenderWave's shaders skip that entire layer. Every kernel is a Metal compute function authored against the Apple Silicon GPU directly. The runtime hands the kernel zero-copy access to the previous frame, the audio analysis buffer, the parameter uniforms, and the output texture. There's no interpreter, no JSON, no GLSL-to-Metal pass.
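A minimal sketch of how host code and kernel authors could agree on where those four resources live. The enum names are hypothetical, not RenderWave's API; the indices mirror the dispatch code in this section. Metal keeps separate argument tables for textures and buffers, so a texture and a buffer can both use index 0 without colliding.

```swift
// Hypothetical binding table shared by host code and kernel authors.
// Textures and buffers live in separate Metal argument tables, so the
// same numeric index can appear once in each enum.
enum TextureSlot: Int {
    case previousFrame = 0  // read: last frame's output, for feedback
    case output = 1         // write: this frame's render target
}

enum BufferSlot: Int {
    case audioBands = 0     // bass / mid / treble analysis
    case paramUniforms = 1  // every user-facing parameter in one struct
}

// Host side (Metal, shown as comments only):
//   encoder.setTexture(prevFrame, index: TextureSlot.previousFrame.rawValue)
//   encoder.setBuffer(audioBands, offset: 0, index: BufferSlot.audioBands.rawValue)
//
// Kernel side (Metal Shading Language), with matching attribute indices:
//   kernel void effect(texture2d<float, access::read>  prev   [[texture(0)]],
//                      texture2d<float, access::write> dst    [[texture(1)]],
//                      constant AudioBands&            audio  [[buffer(0)]],
//                      constant ParamUniforms&         params [[buffer(1)]],
//                      uint2 gid [[thread_position_in_grid]])
```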

Concretely, the dispatch loop looks like this:

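```swift
// Per-frame, per-shader dispatch (simplified)
// makeComputeCommandEncoder() returns an optional, so unwrap it first.
guard let encoder = commandBuffer.makeComputeCommandEncoder() else { return }

encoder.setComputePipelineState(shader.pipeline)       // built once at load, cached

encoder.setTexture(prevFrame, index: 0)                // previous frame (read)
encoder.setTexture(outputTexture, index: 1)           // output texture (write)

encoder.setBuffer(audioBands, offset: 0, index: 0)     // bass / mid / treble
encoder.setBuffer(paramUniforms, offset: 0, index: 1)  // parameter uniforms

// grid = number of threadgroups, tg = threads per threadgroup (both MTLSize)
encoder.dispatchThreadgroups(grid, threadsPerThreadgroup: tg)
encoder.endEncoding()
```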
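The snippet leaves `grid` and `tg` undefined. With `dispatchThreadgroups`, `grid` counts threadgroups rather than pixels, so it is usually computed by ceiling division of the texture size by the threadgroup size. Below is a plain-Swift sketch of that arithmetic; `Size2D` and `threadgroupCount` are hypothetical helpers, and in real Metal code the results would be wrapped in `MTLSize(width:height:depth:)` with a depth of 1.

```swift
// Hypothetical 2D size type standing in for MTLSize (depth fixed at 1).
struct Size2D: Equatable {
    var width: Int
    var height: Int
}

// Ceiling division so partial tiles at the right and bottom edges are covered.
func threadgroupCount(texture: Size2D, threadgroup: Size2D) -> Size2D {
    precondition(threadgroup.width > 0 && threadgroup.height > 0)
    return Size2D(
        width: (texture.width + threadgroup.width - 1) / threadgroup.width,
        height: (texture.height + threadgroup.height - 1) / threadgroup.height
    )
}

let uhd = Size2D(width: 3840, height: 2160)
let tile = Size2D(width: 16, height: 16)
let groups = threadgroupCount(texture: uhd, threadgroup: tile)
// groups == Size2D(width: 240, height: 135)
```

Ceiling division can launch threads past the right and bottom edges (1080 rows with 32-row tiles needs 34 groups, not 33.75), so the kernel needs a bounds check on its thread position. On GPUs that support non-uniform threadgroup sizes, which includes Apple Silicon, `dispatchThreads(_:threadsPerThreadgroup:)` takes the exact pixel grid instead and avoids the extra threads.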

The pipeline state is built once at load and cached. Parameter changes from MIDI, audio, or the sequencer write into a single uniform buffer, so they never trigger shader recompilation. The kernel reads the audio bands from a tiny structured buffer rather than from global state. That's why per-band audio reactivity stays cheap even with eight effects stacked on top.
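A minimal sketch of what "a single uniform buffer" and "a tiny structured buffer" could look like on the host side. The struct fields and the `applyMIDI` helper are hypothetical; RenderWave's actual layouts are not documented here. The point it illustrates is that a MIDI CC change becomes a plain data write and leaves the cached pipeline state alone.

```swift
// Hypothetical audio-band layout: three scalar floats, 12 bytes.
// The kernel would declare a struct with the same fields in the same order.
struct AudioBands {
    var bass: Float = 0
    var mid: Float = 0
    var treble: Float = 0
}

// Hypothetical parameter block; every user-facing control lives here.
struct ParamUniforms {
    var intensity: Float = 0.5
    var speed: Float = 1.0
    var hueShift: Float = 0.0
    var feedback: Float = 0.8
}

// Maps a 7-bit MIDI CC value (0...127) to 0...1 and writes it into the
// chosen field. Only data changes; no pipeline is rebuilt.
func applyMIDI(cc value: UInt8,
               to field: WritableKeyPath<ParamUniforms, Float>,
               in uniforms: inout ParamUniforms) {
    let clamped = min(value, 127)
    uniforms[keyPath: field] = Float(clamped) / 127.0
}

var uniforms = ParamUniforms()
applyMIDI(cc: 127, to: \.intensity, in: &uniforms)  // intensity -> 1.0
applyMIDI(cc: 0, to: \.hueShift, in: &uniforms)     // hueShift  -> 0.0
```

The bands use three scalar `Float`s rather than a `SIMD3<Float>` because the three-component vector occupies 16 bytes in both Swift and MSL, an easy host/kernel layout mismatch to avoid. Either struct is small enough to copy into a shared-storage buffer every frame.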

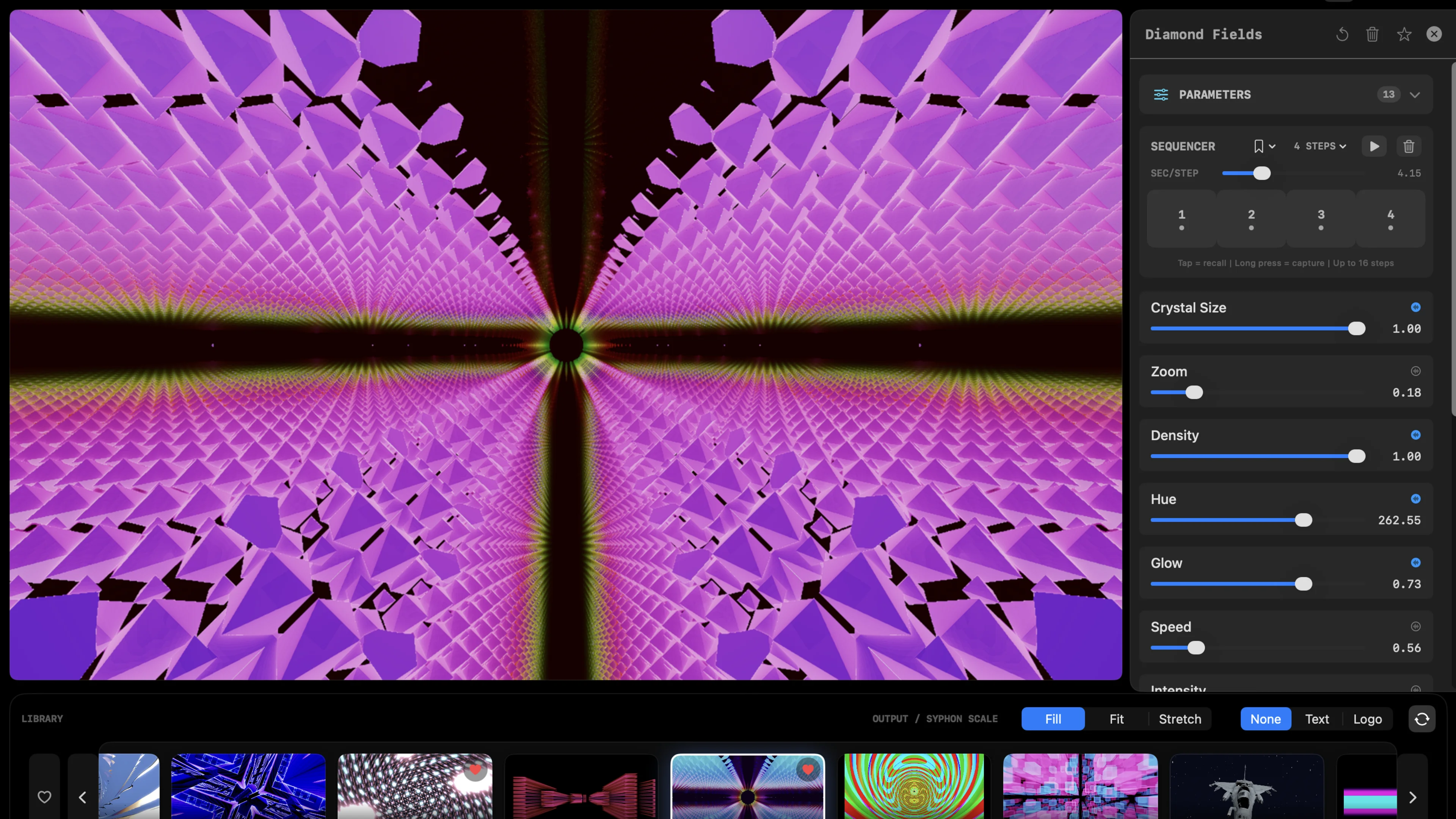

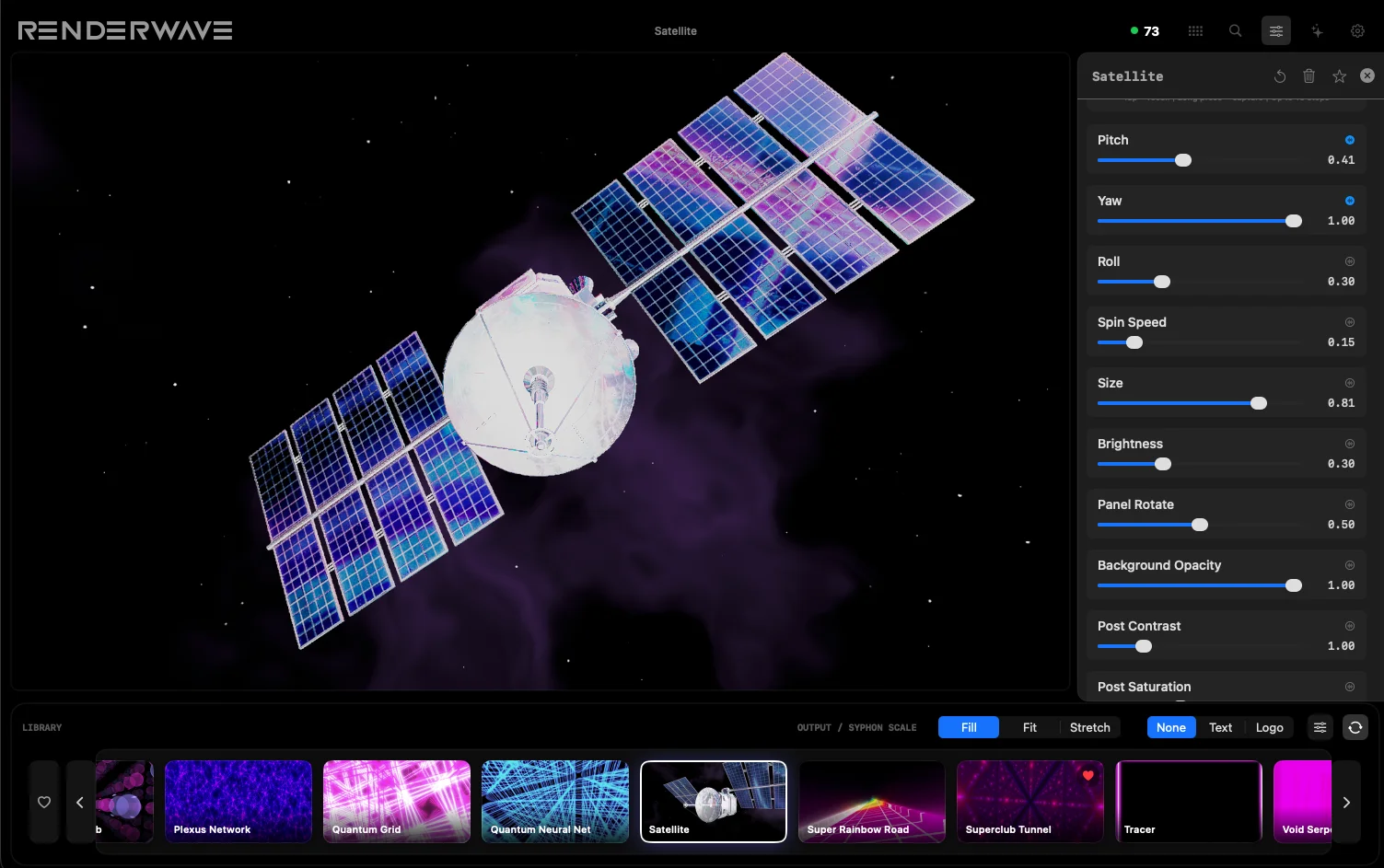

The practical result for VJs: RenderWave can hold 4K60 with the audio analyzer running, MIDI feedback active, Syphon output enabled, and three post-processing effects chained. ISF-based hosts on Mac can match the look; they just need more GPU headroom to do it, which leaves less budget for other effects in your stack.
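To put "4K60" in numbers, here is a back-of-the-envelope sketch. It assumes 4K means UHD (3840×2160) and that each effect is one full-screen compute pass with one thread per pixel; both are assumptions, not details stated above, and `invocationsPerFrame` is a hypothetical helper.

```swift
// Back-of-the-envelope budget for a 4K60 target (assumed UHD, 3840x2160).
let width = 3840
let height = 2160
let fps = 60.0

let pixelsPerFrame = width * height                      // 8,294,400
let frameBudgetMs = 1000.0 / fps                         // ~16.67 ms per frame
let invocationsPerSecond = Double(pixelsPerFrame) * fps  // 497,664,000 per full-screen pass

// Thread invocations per frame when `passes` full-screen passes run back to back.
func invocationsPerFrame(width: Int, height: Int, passes: Int) -> Int {
    width * height * passes
}
```

Assuming a base shader plus the three chained effects, that is four passes and about 33 million thread invocations per frame, all of which must share the same ~16.67 ms window with everything else the frame does.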